Users

Home Buyers, Sellers, Real Estate Agents, Internal Product & Data Teams

Industry

Real Estate / PropTech

Product Stage

Production-Grade Data Platform (Foundational Infrastructure)

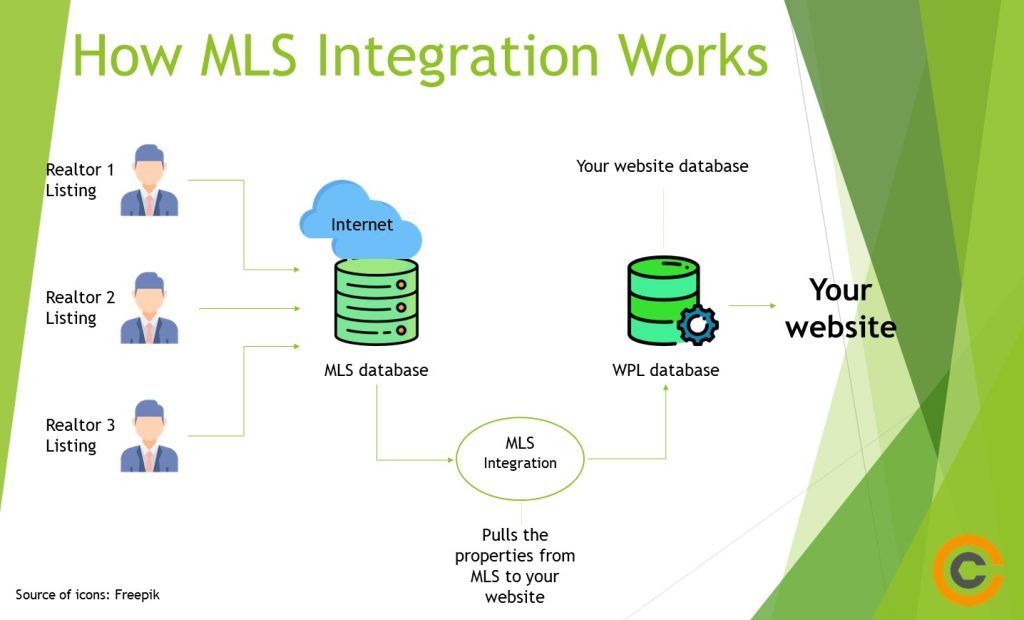

MLS API Integration Platform

MLS data is both incredibly valuable and tightly constrained. While it appears externally as a listings feed, in practice it is a complex ecosystem of data contracts, update semantics, compliance rules, and operational edge cases.

This work focused on building an MLS API integration platform that could reliably ingest, normalize, and serve listing data at scale, while respecting regulatory boundaries and enabling downstream product capabilities such as search, pricing models, and market analytics.

The challenge was not access alone; it was building a sustainable, compliant data foundation.

Context and Scope

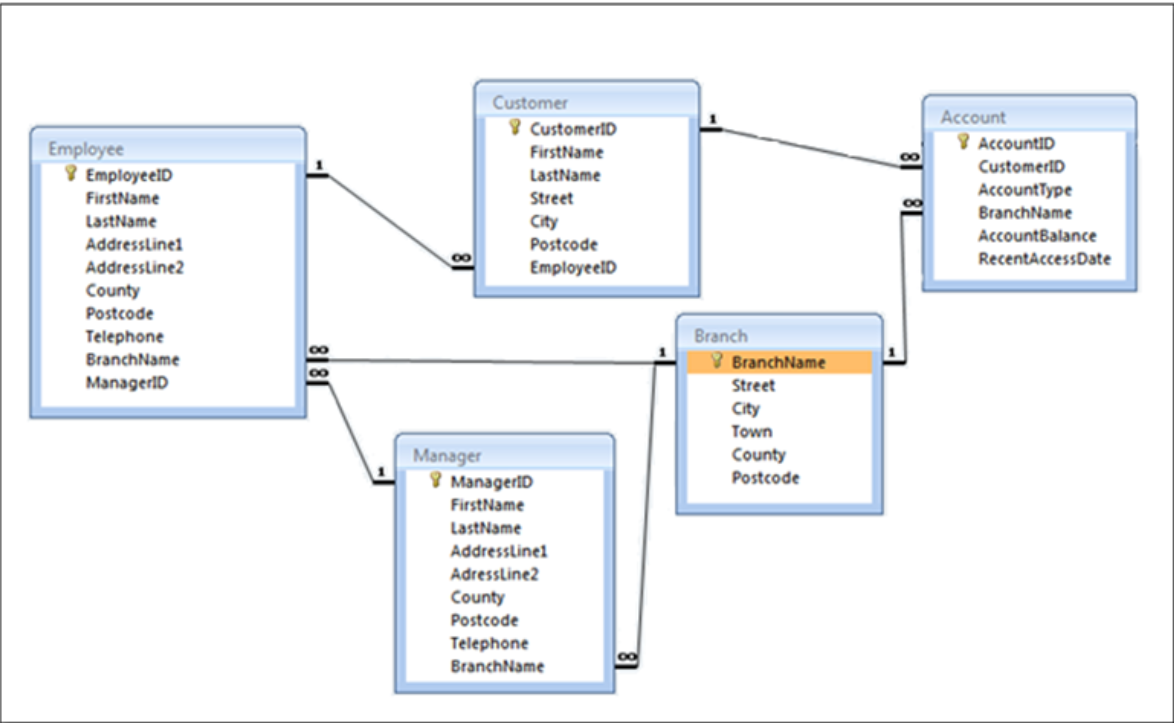

The platform depended on MLS data as its primary source of truth for active, historical, and off-market listings across multiple regions.

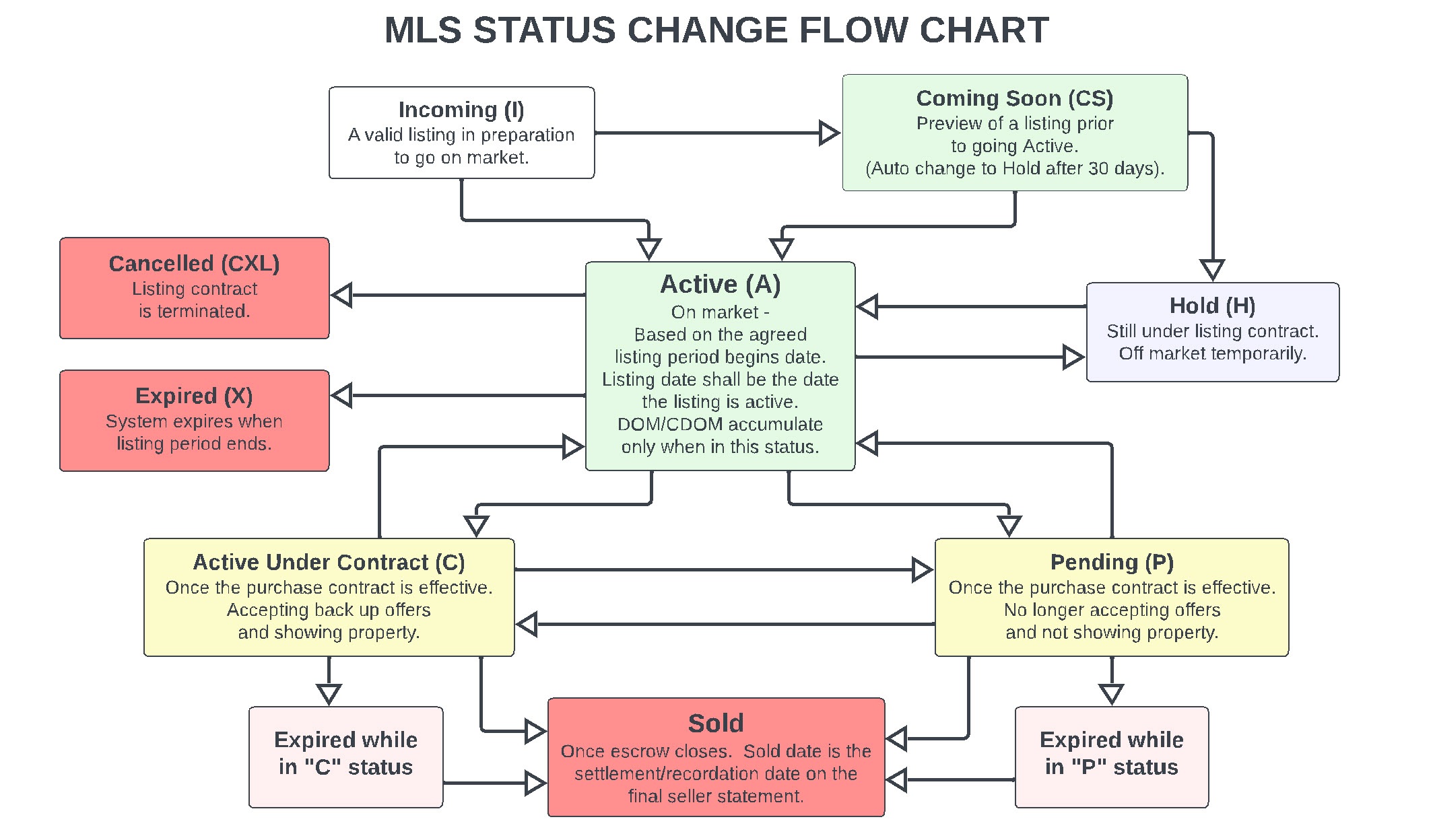

MLS feeds varied widely in structure, update frequency, and data quality. Listing states changed frequently, often with partial updates rather than full refreshes. Media assets, property attributes, and status transitions required careful handling to avoid stale or inconsistent user experiences.
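
To make the partial-update problem concrete, here is a minimal Python sketch of merging an incremental update into a stored listing. It is an illustration under stated assumptions, not the platform's actual code: the field names (`listing_id`, `status`, `price`, `photos`, `modified_at`) and the rule that older updates are ignored are hypothetical.

```python
from datetime import datetime
from typing import Any


def apply_partial_update(current: dict[str, Any], update: dict[str, Any]) -> dict[str, Any]:
    """Merge a partial update into a stored listing record.

    Assumption: a field absent from the update means "unchanged", and
    every record carries a `modified_at` timestamp (hypothetical name).
    """
    # Ignore out-of-order updates so an old message cannot overwrite newer state.
    if update["modified_at"] <= current["modified_at"]:
        return current
    merged = dict(current)   # copy, so the stored record is not mutated
    merged.update(update)    # overwrite only the fields the feed actually sent
    return merged


listing = {
    "listing_id": "A1",
    "status": "active",
    "price": 500_000,
    "photos": ["front.jpg"],
    "modified_at": datetime(2024, 1, 1),
}
price_change = {"listing_id": "A1", "price": 489_000, "modified_at": datetime(2024, 1, 5)}
late_arrival = {"listing_id": "A1", "status": "pending", "modified_at": datetime(2023, 12, 30)}

updated = apply_partial_update(listing, price_change)
unchanged = apply_partial_update(updated, late_arrival)
```

In this sketch the price change is applied while status and photos are preserved, and the late-arriving message is dropped. A real feed would also need a convention for explicitly cleared fields, which this example does not cover.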

At the same time, MLS data came with strict usage rules around display, attribution, and downstream use, making compliance a first-order product constraint.

The Problem

Raw MLS feeds were not product-ready.

Data arrived in inconsistent formats, with missing or conflicting fields, region-specific conventions, and ambiguous lifecycle events. Without normalization and validation, downstream systems would interpret the same listing differently depending on timing or source.

There was also no clean separation between ingestion concerns and product consumption. Tightly coupling MLS feeds directly to user-facing features would make the system brittle, hard to evolve, and difficult to audit.

The core problem was designing a platform that could abstract MLS complexity, enforce consistency, and still remain flexible enough to support evolving product needs.

My Role

I owned the end-to-end product strategy for the MLS integration platform.

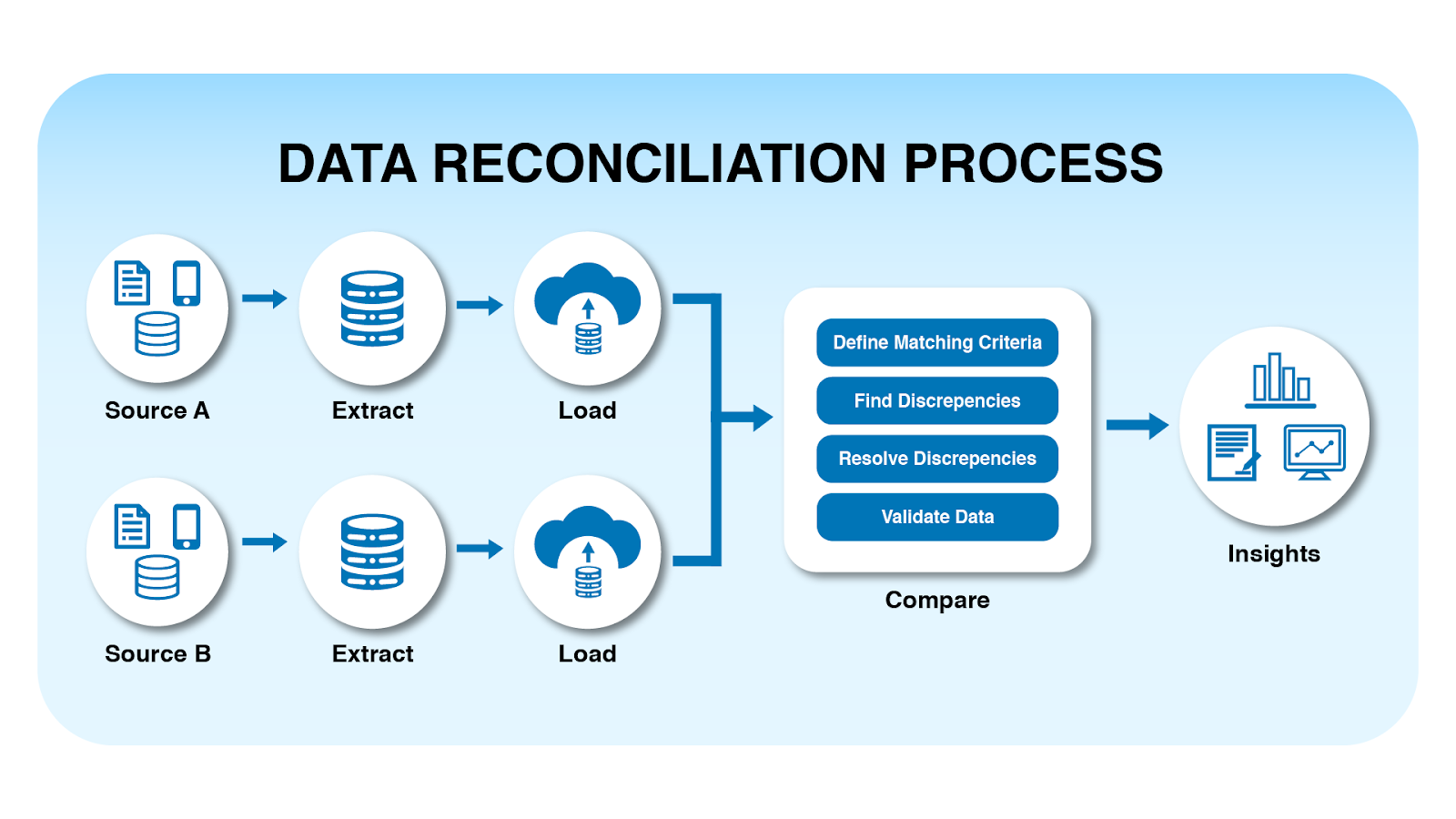

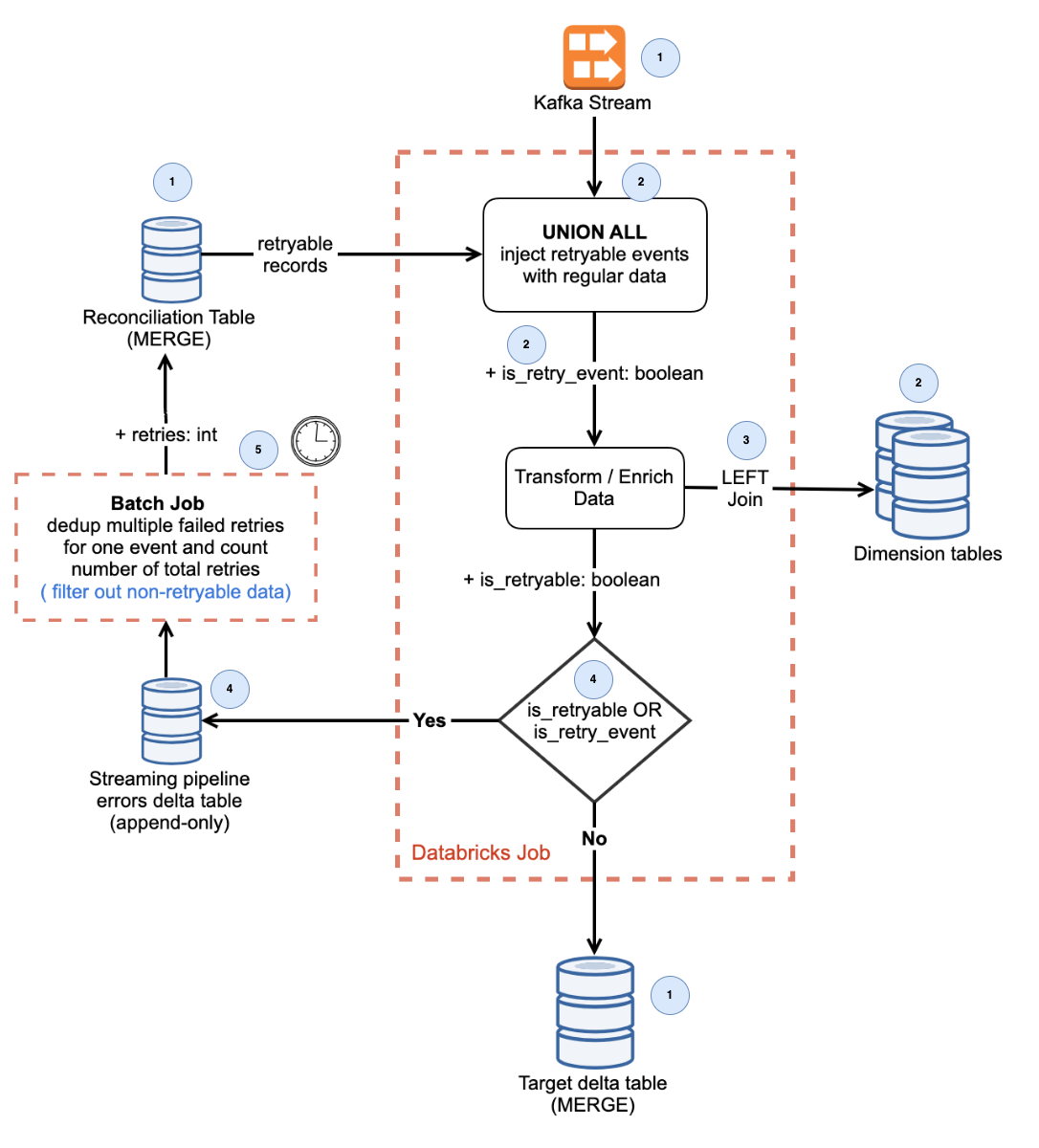

That included defining ingestion workflows, data contracts, normalization logic, and lifecycle models for listings. I worked closely with data and engineering teams to ensure the platform could handle incremental updates, reconcile conflicting signals, and surface reliable state transitions.

Equally important, I owned decisions around what MLS data could and could not be used downstream, ensuring that analytics, AI models, and user experiences remained compliant with usage rules while still delivering value.

Platform Architecture & Product Decisions

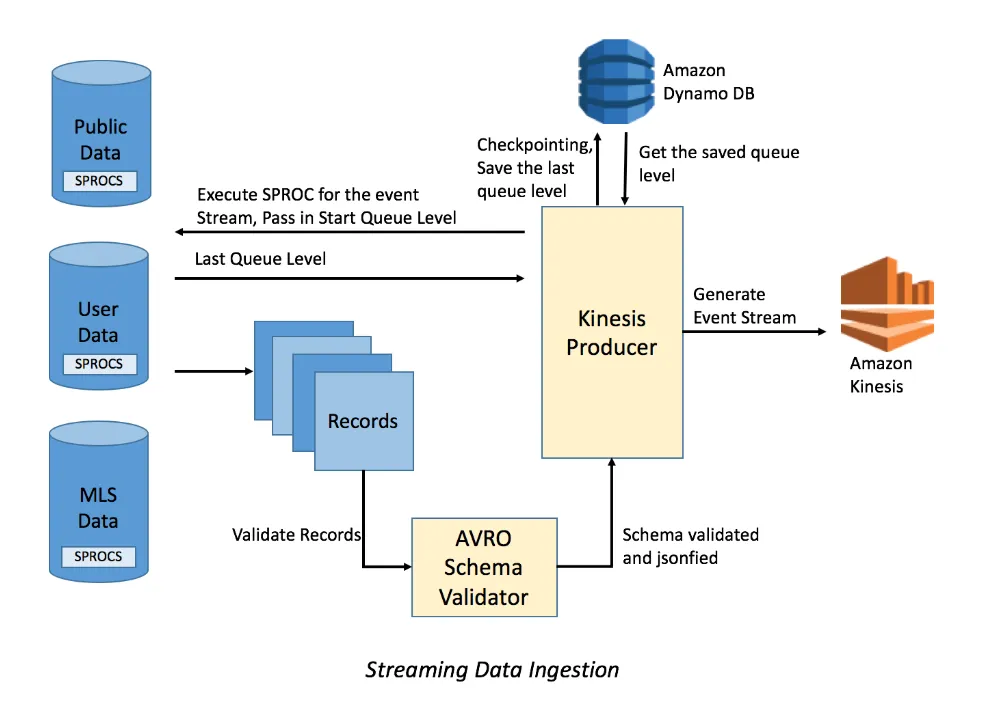

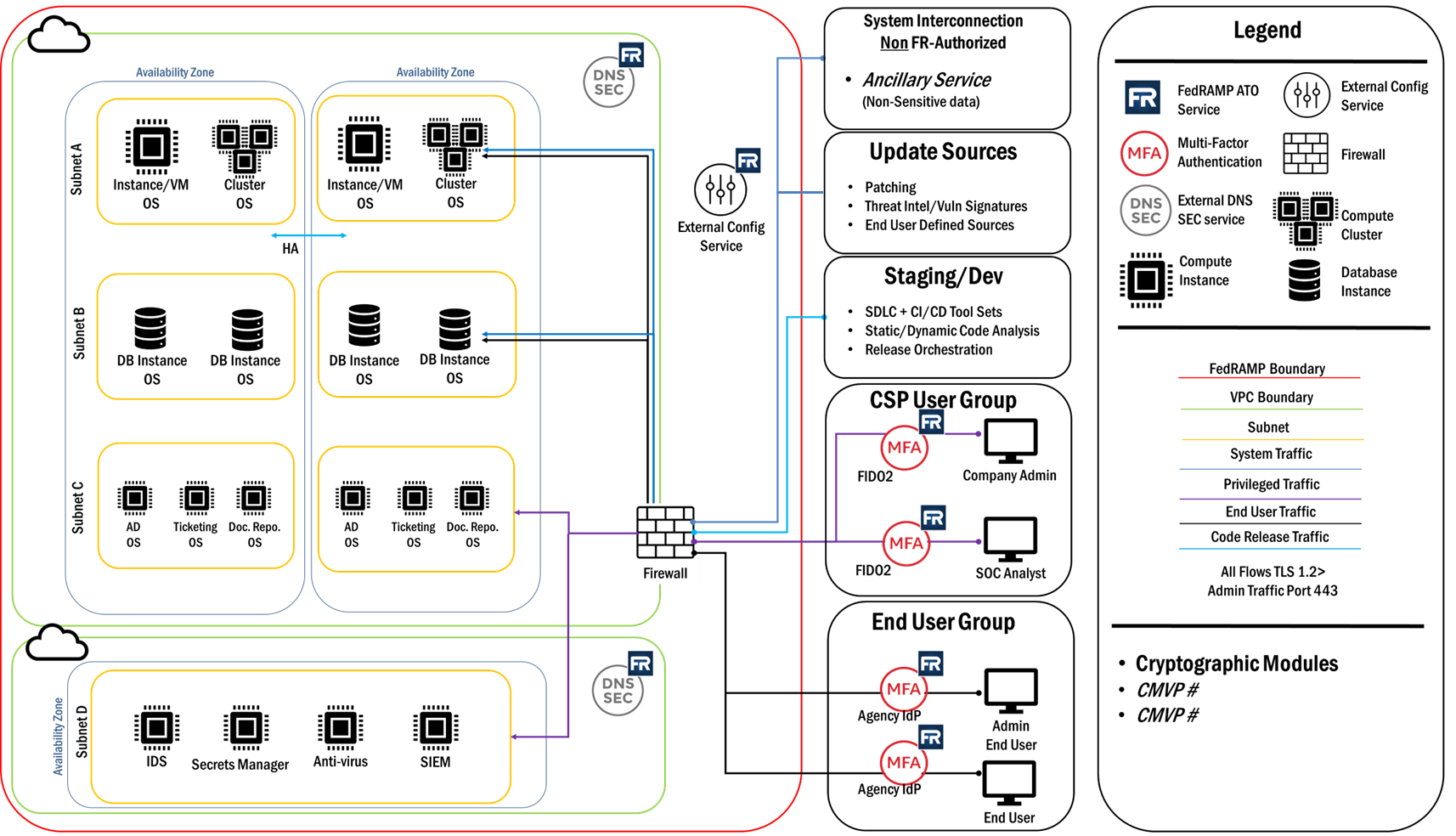

Rather than treating MLS feeds as a single pipe, the platform was designed as a layered system.

Ingestion pipelines handled feed-specific parsing and update semantics. A normalization layer mapped disparate schemas into a consistent internal representation. Lifecycle logic determined how listings moved through states such as active, pending, terminated, and sold.
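
A normalization layer of this kind can be sketched as a per-feed field mapping plus a shared status vocabulary. The example below is a simplified illustration, not the production design: the feed names, external field names, and status codes are all hypothetical.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Listing:
    """Internal representation shared by all downstream consumers."""
    listing_id: str
    status: str
    price: int


# Hypothetical per-feed mappings: external field name -> internal field name.
FIELD_MAPS = {
    "feed_a": {"ListingKey": "listing_id", "Status": "status", "ListPrice": "price"},
    "feed_b": {"id": "listing_id", "state": "status", "asking": "price"},
}

# Hypothetical region-specific status values mapped onto one vocabulary.
STATUS_MAP = {
    "active": "active", "a": "active",
    "pending": "pending", "p": "pending",
    "terminated": "terminated", "t": "terminated",
    "sold": "sold", "s": "sold",
}


def normalize(feed: str, raw: dict[str, Any]) -> Listing:
    """Map a raw feed record into the internal Listing shape.

    Missing fields or unknown statuses raise KeyError, so bad records
    fail loudly instead of reaching downstream consumers.
    """
    mapping = FIELD_MAPS[feed]
    fields = {internal: raw[external] for external, internal in mapping.items()}
    status = STATUS_MAP[str(fields["status"]).strip().lower()]
    return Listing(
        listing_id=str(fields["listing_id"]),
        status=status,
        price=int(float(fields["price"])),
    )


from_a = normalize("feed_a", {"ListingKey": "A1", "Status": "Active", "ListPrice": "500000.00"})
from_b = normalize("feed_b", {"id": "A1", "state": "A", "asking": 500000})
```

Both raw records resolve to the same `Listing`, which is the property the normalization layer exists to provide.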

Downstream consumers (search, pricing models, and behavioral analytics) interacted with MLS data through stable internal APIs, decoupling product evolution from upstream feed volatility.

This separation allowed new product capabilities to be built without reworking ingestion logic and made compliance enforcement explicit rather than implicit.

Data Quality, Freshness & Trust

Real estate data is time-sensitive.

Small delays or missed updates can materially affect user trust. At the same time, aggressively processing every update risked instability and unnecessary load.

To balance this, the platform incorporated validation checks, change detection, and prioritization logic that ensured meaningful updates propagated quickly while noise was filtered out. Data freshness was monitored as a product metric, not just an operational concern.
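
One way to picture change detection and prioritization is the sketch below. It assumes a small set of "material" fields and a fixed urgency ranking; both are hypothetical choices for illustration, as are the field names.

```python
import heapq
from typing import Any, Optional

# Hypothetical ranking of material fields: lower number = more urgent.
PRIORITY = {"status": 0, "price": 1, "photos": 2}


def classify_change(old: dict[str, Any], new: dict[str, Any]) -> Optional[int]:
    """Return the urgency of an update, or None if nothing material changed."""
    changed = [field for field in PRIORITY if old.get(field) != new.get(field)]
    if not changed:
        return None  # noise: only non-material fields differ
    return min(PRIORITY[field] for field in changed)


def build_queue(pairs: list[tuple[dict[str, Any], dict[str, Any]]]) -> list[tuple[int, int, str]]:
    """Build a heap of (priority, arrival order, listing_id) for material updates."""
    heap: list[tuple[int, int, str]] = []
    for seq, (old, new) in enumerate(pairs):
        priority = classify_change(old, new)
        if priority is not None:
            heapq.heappush(heap, (priority, seq, new["listing_id"]))
    return heap


def record(listing_id: str, **overrides: Any) -> dict[str, Any]:
    """Helper that builds a sample record for the example."""
    base = {"listing_id": listing_id, "status": "active", "price": 500_000,
            "photos": ["front.jpg"], "remarks": "Sunny lot"}
    return {**base, **overrides}


pairs = [
    (record("A1"), record("A1", remarks="Sunny corner lot")),  # noise, filtered out
    (record("A2"), record("A2", price=489_000)),               # price change
    (record("A3"), record("A3", status="pending")),            # status change
]
queue = build_queue(pairs)
order = [heapq.heappop(queue)[2] for _ in range(len(queue))]
```

Here the remarks-only edit never enters the queue, and the status change is processed ahead of the price change even though it arrived later.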

Trust in listing accuracy was treated as a core UX requirement.

Risks

MLS integrations fail quietly.

Stale listings, incorrect statuses, or missing media degrade user confidence over time rather than triggering obvious failures. Compliance missteps can create legal or contractual exposure.

Managing these risks required clear ownership of data semantics, explicit handling of edge cases, and conservative defaults when signals were ambiguous.

I deliberately prioritized correctness and traceability over aggressive optimization.
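
A conservative default for ambiguous signals can be sketched as a transition table: unexpected status changes are not applied, and the listing is flagged for review instead. The allowed transitions below are illustrative assumptions, not actual MLS rules, which vary by region.

```python
from typing import Optional

# Hypothetical allowed transitions between the listing states named earlier.
ALLOWED = {
    "active": {"pending", "terminated", "sold"},
    "pending": {"active", "terminated", "sold"},
    "terminated": {"active"},
    "sold": set(),
}


def resolve_status(current: str, incoming: Optional[str]) -> tuple[str, bool]:
    """Return (status_to_store, needs_review).

    Conservative default: if the incoming status is missing or is not an
    expected transition, keep the current status and flag the listing
    rather than guessing.
    """
    if incoming == current:
        return current, False
    if incoming in ALLOWED.get(current, set()):
        return incoming, False
    return current, True


normal = resolve_status("active", "pending")
suspicious = resolve_status("sold", "active")
missing = resolve_status("active", None)
```

In this sketch a sold listing that suddenly reports as active stays sold and is queued for review, which trades some latency for traceability.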

Go-To-Market

The MLS integration platform was not marketed directly, but it underpinned nearly every customer-facing capability.

Its value was expressed through downstream features: more reliable search results, accurate pricing insights, timely status updates, and trustworthy analytics. Internally, it enabled faster iteration by providing a stable data foundation for experimentation and AI-driven features.

From a GTM perspective, MLS reliability became part of the platform's credibility: a prerequisite for adoption rather than a headline feature.

Outcomes

The platform provided a consistent, compliant, and scalable foundation for listing data across regions.

Product teams were able to build higher-order capabilities, including pricing models, buyer behavior analytics, and insights, without re-implementing MLS logic. Listing accuracy improved, and user trust increased as stale or inconsistent data was reduced.

Most importantly, MLS integration shifted from a fragile dependency to a durable platform capability.