Users

Risk Operations Teams, Fraud Operations, Compliance & Regulatory Teams, Payments Product Teams

Industry

Banking / Payments / FinTech

Product Stage

Production-Grade AI Decision Intelligence (Internal, Risk-Critical)

AI-Based Transaction Anomaly Detection & Explainability

Not every unexpected transaction is fraudulent, but every unexpected transaction carries risk. In large-scale payment systems, meaningful risk often emerges before it becomes fraud, in the form of shifts in behavior, deviations from established patterns, or structural changes in transaction flows.

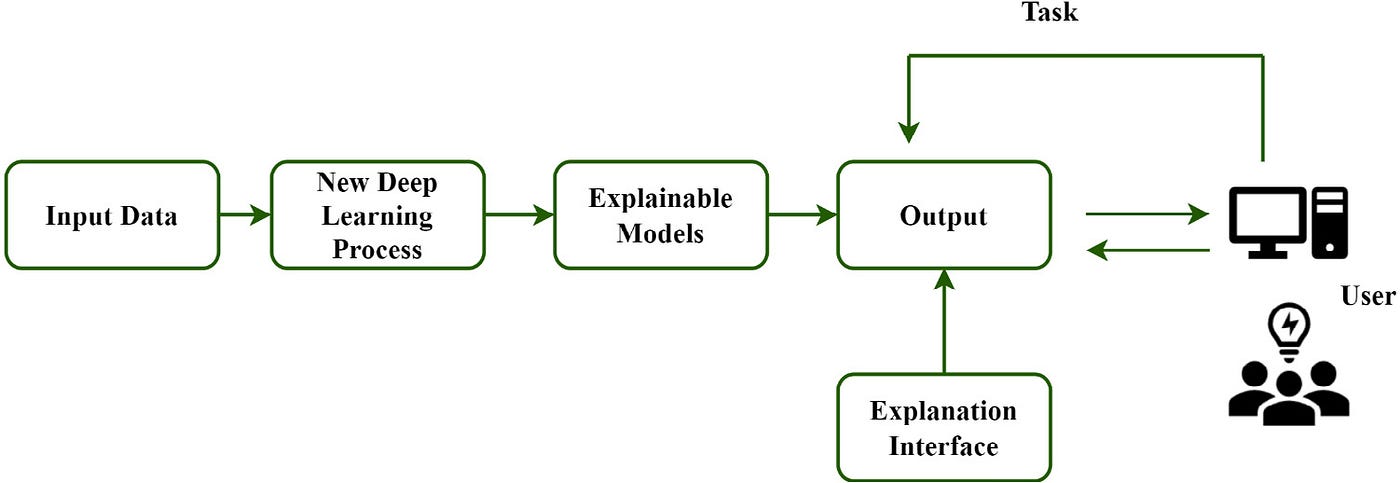

This product focused on building an AI-based anomaly detection and explainability platform that could surface unusual behavior across transactions, customers, merchants, and corridors, and explain why something looked different without immediately enforcing a decline or disruption.

The goal was understanding first, action second.

Context and Scope

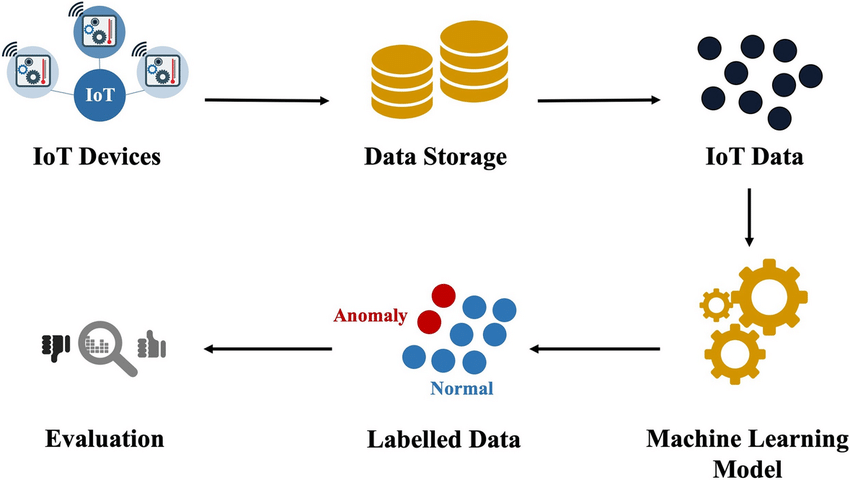

The platform operated in a high-volume payments environment where millions of transactions flowed across cards, accounts, geographies, and merchants in real time.

Traditional fraud systems were optimized for known bad patterns and real-time enforcement. However, many operational and risk issues manifested earlier as anomalies: sudden spending shifts, merchant behavior changes, corridor-level spikes, or customer patterns that didn’t match historical baselines.

These signals were visible in data, but not structured into a system that product, risk, and operations teams could interpret consistently.

The Problem

Unexpected behavior was treated as noise until it became a problem.

When anomalies were detected, they were often buried in logs, surfaced without context, or immediately escalated into enforcement actions that increased false positives and customer friction. Risk teams lacked tools to understand why something was anomalous and whether it warranted intervention.

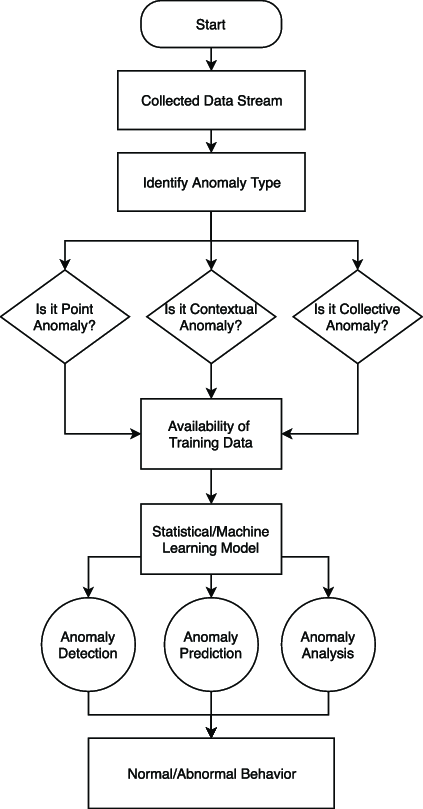

The core problem was designing a system that could:

- Detect novel and contextual anomalies
- Explain deviation in human-understandable terms
- Support different downstream actions beyond “block”
- Operate safely alongside real-time fraud systems

This required diagnostic intelligence, not just classification.

My Role

I owned the end-to-end product strategy for the anomaly detection and explainability platform.

That included defining what constituted an anomaly at different levels (transaction, customer, merchant, corridor), deciding which signals were appropriate for unsupervised or semi-supervised modeling, and shaping how explanations should be generated and consumed by different stakeholders.

A key part of my role was designing the interaction boundary between this platform and real-time fraud systems, ensuring that anomalies informed decision-making without automatically triggering enforcement.

Modeling & Explainability Decisions

Rather than relying on static thresholds, the platform established dynamic baselines that adapted to context.

Behavior was evaluated relative to historical norms for a given entity (such as a customer, merchant, or region) rather than global averages. This allowed the system to identify deviations that were meaningful in context, not just statistically rare.
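To make the idea of entity-relative baselines concrete, here is a minimal sketch. It is an illustration only, not the production model: the robust z-score (median and MAD), the 3.5 cutoff, the `HISTORY` data, and the function names are all assumptions chosen for the example.

```python
from statistics import median

# Hypothetical per-entity histories of daily spend; all values are illustrative.
HISTORY = {
    "customer_a": [20, 22, 19, 21, 20, 23, 18],
    "customer_b": [900, 1100, 950, 1050, 1000, 980, 1020],
}

def robust_z(history, value):
    """Score `value` against an entity's own history using median and MAD."""
    center = median(history)
    mad = median([abs(x - center) for x in history])
    if mad == 0:
        # Flat history: any change is a deviation, no change is not.
        return 0.0 if value == center else float("inf")
    # 0.6745 rescales MAD so the score is comparable to a standard z-score
    # when the data is roughly normal.
    return 0.6745 * (value - center) / mad

def is_anomalous(entity_id, value, threshold=3.5):
    """Flag a value far from this entity's own baseline (assumed cutoff)."""
    return abs(robust_z(HISTORY[entity_id], value)) > threshold
```

With these sample histories, a spend of 1,000 is flagged for `customer_a`, whose baseline sits around 20, but not for `customer_b`, whose baseline sits around 1,000. A single global threshold could not separate the two cases.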

Explainability was treated as a first-class product requirement. When an anomaly was surfaced, the system highlighted contributing factors such as deviation magnitude, temporal shifts, peer comparison, or sudden structural changes. The intent was not to justify a decision, but to enable understanding.
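One way such an explanation could be assembled is sketched below. The `Factor` structure, the weights, and the example details are hypothetical and only illustrate the shape of the output: a ranked, descriptive list of what changed, with no verdict attached.

```python
from dataclasses import dataclass

@dataclass
class Factor:
    name: str      # e.g. "deviation magnitude", "peer comparison"
    weight: float  # hypothetical relative contribution, 0 to 1
    detail: str    # human-readable description of what changed

def explain(entity_id, factors, top_n=3):
    """Return a short, descriptive explanation: what changed and by how much."""
    ranked = sorted(factors, key=lambda f: f.weight, reverse=True)[:top_n]
    lines = [f"{entity_id}: behavior differs from its baseline"]
    lines += [f"- {f.name} ({f.weight:.2f}): {f.detail}" for f in ranked]
    return "\n".join(lines)

# Illustrative input only; these factors and values are made up.
factors = [
    Factor("temporal shift", 0.20, "activity moved from daytime to 02:00-04:00"),
    Factor("deviation magnitude", 0.55, "daily volume well above its usual range"),
    Factor("peer comparison", 0.25, "higher than merchants in the same category"),
]
report = explain("merchant_123", factors, top_n=2)
```

The report lists the strongest contributing factors first and describes the change without recommending an action, which matches the intent of enabling understanding rather than justifying a decision.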

Importantly, the platform avoided labeling anomalies as fraud. It focused on what changed and how significantly, leaving interpretation and action to downstream systems or human operators.

Managing Risk & Regulatory Sensitivity

Anomaly detection systems carry subtle risk.

Overreacting to anomalies can create unnecessary disruption. Underreacting can allow emerging issues to propagate. Explanations that are too opaque undermine trust; explanations that are too prescriptive overstep the role of diagnostic intelligence.

To manage this, anomaly outputs were gated by confidence and stability checks. Low-confidence signals were flagged for observation, while higher-confidence patterns were escalated with contextual explanation rather than action mandates.
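The gating logic can be sketched roughly as follows. The thresholds, the use of "consecutive windows seen" as the stability check, and the names `Route` and `route` are assumptions for illustration, not the platform's actual rules.

```python
from enum import Enum

class Route(Enum):
    OBSERVE = "observe"    # keep watching, no escalation
    ESCALATE = "escalate"  # hand to a person or downstream system with context

def route(confidence, windows_seen, min_confidence=0.8, min_windows=3):
    """Gate an anomaly on confidence and stability (thresholds are assumptions)."""
    stable = windows_seen >= min_windows
    if confidence >= min_confidence and stable:
        return Route.ESCALATE
    return Route.OBSERVE
```

Note that the sketch has no "block" outcome. Even the strongest signal is only escalated with its explanation, leaving the decision to downstream systems or human operators.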

This positioning aligned well with regulatory expectations around transparency, proportionality, and human oversight.

Go-To-Market

The platform was positioned internally as decision intelligence, not fraud prevention.

It was introduced as a way to help risk, operations, and product teams understand emerging patterns earlier and respond more thoughtfully. Rather than replacing existing systems, it complemented them by adding visibility into behavior that traditional rules and models struggled to capture.

Adoption focused on internal workflows, where explainability and trust were more important than automation speed.

Outcomes

The platform improved early detection of behavioral shifts and emerging risks, reducing reliance on reactive investigations. Teams were able to distinguish between benign changes and signals that warranted escalation, improving response quality without increasing customer friction.

Most importantly, it created a shared language around why behavior looked unusual, strengthening collaboration between product, risk, and operations teams.