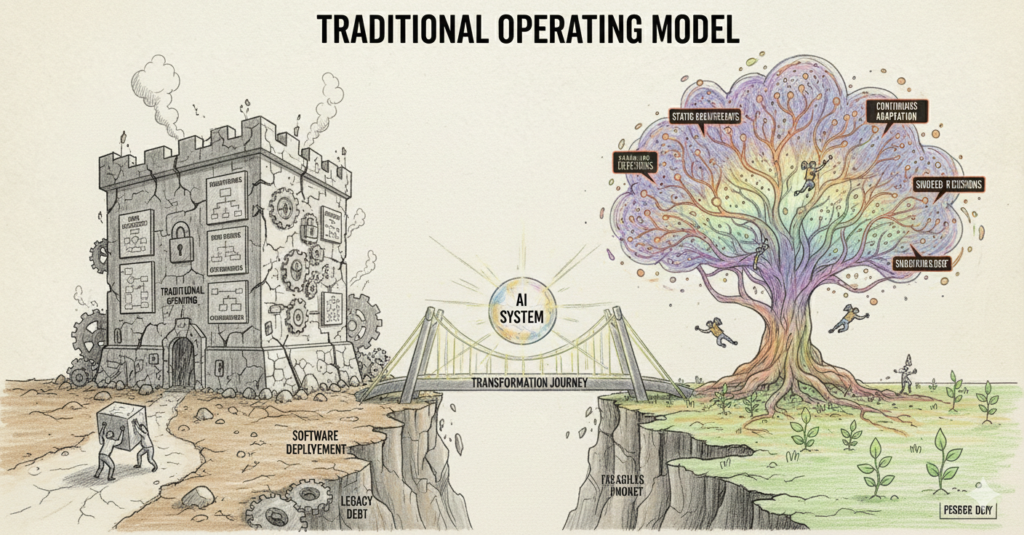

AI Transformation Is Not a Model Problem; It’s an Operating Model Problem

What Actually Breaks in AI Transformations

Over the last several years, I’ve been directly involved in building, integrating, and operating AI-driven decision systems inside environments where mistakes were not theoretical. These were not demo environments or innovation labs. They were production systems touching payments, fraud, risk, compliance, and customer outcomes, systems where false positives cost revenue, false negatives created regulatory exposure, and delays eroded trust internally long before customers ever noticed.

Across these initiatives, I noticed a pattern that became impossible to ignore. When AI programs struggled or failed, leadership discussions almost always drifted toward technical explanations. We questioned the data, the features, the training approach, or the sophistication of the model. We debated whether we needed different algorithms, more data scientists, or better tooling. Occasionally, we blamed execution. Rarely did we question the structure of the organization itself.

Yet when I looked closely at where friction actually emerged (where teams stalled, where trust eroded, where deployments froze), it was almost never because the model was fundamentally incapable. It was because the organization did not know how to operate a system whose behavior was probabilistic, adaptive, and continuously evolving.

This is the core claim of this paper: AI transformation fails not because organizations lack intelligence, but because they try to run AI systems inside operating models designed for deterministic software and human certainty. Until that mismatch is addressed, improvements in model quality only mask deeper structural problems.

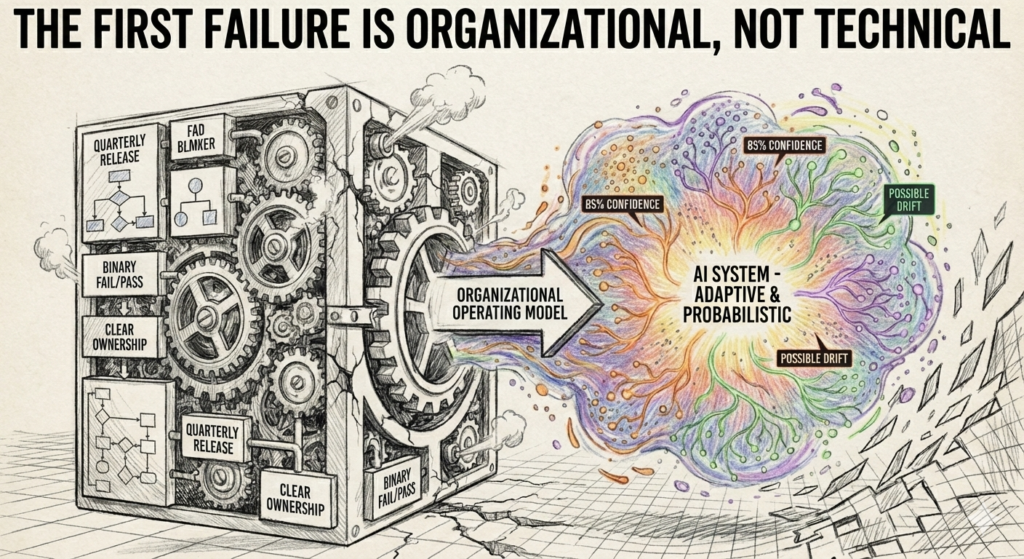

The First Failure Is Organizational, Not Technical

One of the earliest AI systems I worked on was designed to assist high-stakes operational decisions. The model itself performed reasonably well in offline testing. Accuracy metrics were within acceptable thresholds. Precision and recall were improving with each iteration. On paper, the system was viable.

The real failure emerged only after deployment.

Operational teams immediately began asking questions that the organization was unprepared to answer. When the system flagged a case incorrectly, no one knew who was accountable. Engineering insisted the model behaved as trained. Product pointed to agreed-upon requirements. Risk teams questioned whether the decision should have been automated in the first place. Operations escalated incidents that were not technically incidents but judgment conflicts between humans and machines.

What became clear very quickly was that we had introduced a new actor into the decision chain without redefining the chain itself.

Traditional software fits neatly into organizational hierarchies. When something breaks, there is a clear owner. A bug is either present or not. A system is either available or down. Escalation paths are well understood. AI systems do not behave this way. They operate in shades of confidence. They are “mostly right” in ways that are hard to reason about when outcomes matter.

The organization, however, was still operating under deterministic assumptions. Approval workflows assumed certainty. Incident processes assumed binary failure. Performance metrics assumed stability over time. None of these assumptions held. This disconnect created friction that no amount of model tuning could resolve.

Why “Better Models” Became the Wrong Question

When early issues surfaced, the instinctive response from leadership was to push for technical improvement. We asked whether we could improve accuracy, add more features, or retrain more frequently. These were reasonable questions, but they were also incomplete.

The real issue was not whether the model could be improved. It was whether the organization had the capacity to absorb uncertainty.

Human decision-makers are inconsistent, biased, and often wrong. Yet organizations tolerate this because human judgment is socially legible. When a human makes a mistake, we can interrogate intent, context, and reasoning even if that reasoning is flawed. When a model makes a mistake, especially one that contradicts human intuition, the organization experiences it as a loss of control.

I saw this play out repeatedly. Early wins created optimism, but the first high-visibility error triggered a collapse in trust that far exceeded the actual impact of the mistake. Decisions that had been quietly automated were suddenly pulled back. Manual reviews were reintroduced. Escalation layers multiplied. The system did not fail technically; it failed politically.

Research has consistently shown that trust in automated systems is fragile and asymmetrical, hard to earn and easy to lose. But what most organizations underestimate is that trust is not a property of the model; it is a property of the operating model surrounding it. Without explicit structures for handling disagreement, error, and uncertainty, trust erosion is inevitable.

(Source: Lee & See, “Trust in Automation”)

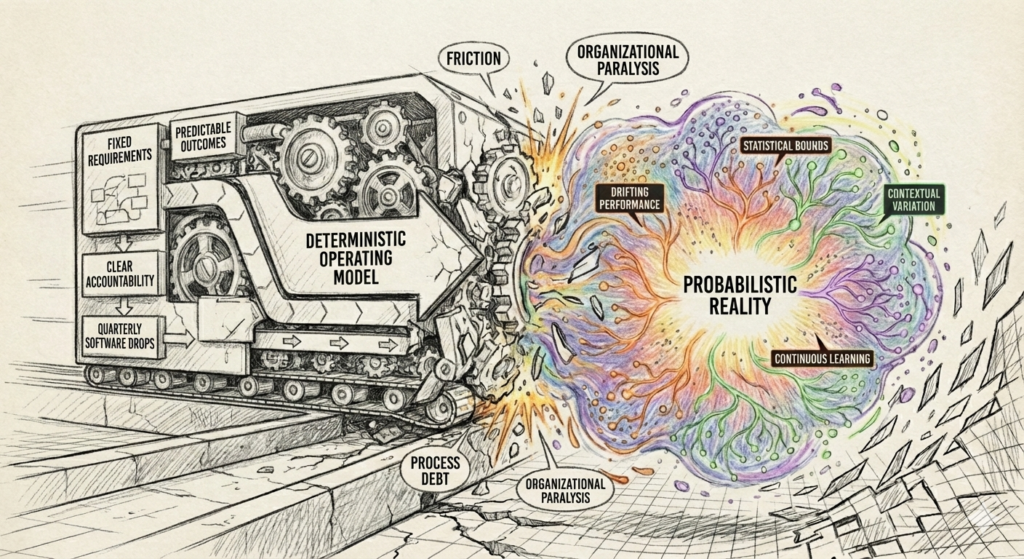

Deterministic Operating Models Collide with Probabilistic Reality

Most modern organizations are optimized for deterministic execution. Requirements are documented upfront. Acceptance criteria define success. Delivery milestones assume predictable outcomes. Accountability is aligned to outputs, not behaviors.

AI systems violate these assumptions by design.

A model does not “meet requirements” in the traditional sense. It behaves within statistical bounds. Its performance varies across segments, time, and context. Drift is not a bug; it is a natural consequence of interacting with a changing environment.

Yet I repeatedly saw organizations attempt to force AI systems into deterministic governance structures. Models were required to meet static thresholds before deployment, even when those thresholds were arbitrary. Change management processes treated retraining as exceptional rather than expected. Release approvals were designed for quarterly software drops, not continuous learning systems.

The result was organizational paralysis.

Teams hesitated to make improvements because every change triggered heavy governance. Conversely, when changes were made, they were large and risky because incremental iteration was operationally expensive. This created exactly the kind of brittle systems that AI is supposed to replace.

This phenomenon has been well documented in production machine learning research, particularly around “configuration debt” and “process debt” that accumulates when organizational practices lag behind system behavior.

(Source: Sculley et al., “Hidden Technical Debt in Machine Learning Systems”)

But in practice, the debt is not just technical. It is organizational debt.

The Moment I Realized This Was an Operating Model Problem

The turning point for me came during a post-incident review that, on the surface, had nothing to do with AI accuracy. A model had flagged a case that a human reviewer overturned. Later, it turned out the model had been correct. The business impact was non-trivial.

The review devolved into defensiveness. Operations argued they could not trust the model. Product argued the override workflow encouraged human intervention without accountability. Engineering argued the model had behaved correctly. Risk argued the system should never have been allowed to operate autonomously.

Everyone was right, and that was the problem.

There was no shared understanding of when human judgment should dominate, how disagreement should be resolved, or how the organization should learn from conflict between human and machine decisions.

We were not missing data or algorithms. We were missing an operating model capable of governing probabilistic decisions. That realization fundamentally changed how I approached AI initiatives going forward.

Decision Latency Is the Silent Killer of AI Systems

One of the earliest misconceptions I had to unlearn was the idea that model latency was the critical performance constraint in AI systems. Early technical discussions obsess over milliseconds: how fast a model can score a transaction, return a classification, or produce a recommendation. This focus makes sense from an engineering standpoint, but it distracts from a much more consequential bottleneck: the time it takes an organization to decide what to do with the output.

In practice, I’ve seen AI systems where inference happened in under 100 milliseconds, but meaningful organizational action took days or weeks. This gap between computational speed and organizational response is what I now think of as decision latency, and it is one of the most underappreciated failure modes in AI transformation.

Decision latency shows up everywhere once you know how to look for it. It appears in approval chains that were designed for quarterly releases but are now asked to govern continuously evolving systems. It appears in risk committees that meet monthly while models adapt daily. It appears in escalation paths that assume clear right and wrong answers in systems that operate in probabilities.

I encountered this most acutely when working on systems that required frequent recalibration, such as fraud detection, risk scoring, or any domain where adversarial behavior evolves. The models themselves were capable of adapting quickly. Feature distributions shifted, retraining pipelines were in place, and offline performance metrics clearly showed when degradation was occurring. Yet acting on those signals required navigating a maze of approvals, documentation, and stakeholder alignment that had never been designed for this cadence.

What made this particularly dangerous was that delay itself became a form of risk. The longer the organization waited to act, the more drift accumulated, and the less representative the model became of current reality. Yet each additional control layer, originally put in place to manage risk, paradoxically increased exposure by slowing response.

This is not an accident. Most enterprises are optimized for risk avoidance through inertia. If nothing changes, nothing breaks. AI systems invert this logic. If nothing changes, performance inevitably degrades.

Research in MLOps and production ML consistently highlights the importance of rapid iteration and monitoring, but it often understates the organizational implications. Continuous delivery pipelines are meaningless if the organization governing them is structurally incapable of making timely decisions.

(Source: Google Cloud, “MLOps: Continuous delivery and automation pipelines in machine learning”)

What I learned through repeated friction is that AI transformation requires organizations to explicitly confront their tolerance for change. If every model update is treated as a high-risk event requiring executive-level scrutiny, the system will ossify. Conversely, if changes are pushed without appropriate guardrails, trust erodes just as quickly.

The solution is not fewer controls or more controls; it is different controls, designed around expected volatility rather than assumed stability. This requires a shift in how leadership thinks about risk, from preventing change to managing continuous adaptation.

Human-in-the-Loop Fails Without Incentive Redesign

Human-in-the-loop (HITL) is often presented as the safe compromise between automation and control. In theory, it allows organizations to benefit from AI while retaining human judgment for edge cases and high-stakes decisions. In practice, I have seen HITL fail more often than it succeeds, not because the concept is flawed, but because the surrounding incentives are misaligned.

The first HITL system I worked on looked reasonable on paper. The model produced a recommendation with a confidence score. A human reviewer could approve, override, or escalate the decision. Overrides were logged. Feedback was theoretically available for retraining. Everyone involved believed this struck the right balance between automation and accountability.

What we failed to account for was how humans actually behave under organizational pressure.

Reviewers were measured on throughput and error rates, not on decision quality. Overrides took longer than approvals and attracted scrutiny. Escalations were seen as signs of uncertainty or incompetence. Over time, a clear pattern emerged: reviewers learned, implicitly, that the safest path was to agree with the model unless the error was obvious and defensible.

This created a dangerous illusion. On paper, humans were “in the loop.” In reality, the loop was collapsing into rubber-stamping. The organization believed it had retained human oversight, but what it had actually built was compliance theater: a process that existed to signal control rather than exercise it.

This dynamic is not unique. Studies in automation bias show that humans tend to over-rely on automated recommendations, especially when those systems are perceived as authoritative or statistically superior.

(Source: Parasuraman & Riley, “Humans and Automation: Use, Misuse, Disuse, Abuse”)

What is often missing from these discussions is the role of incentives. Humans do not override models based on abstract principles; they do so based on how overrides are rewarded or punished. If the cost of disagreement is higher than the cost of being wrong together, organizations will converge on collective error.

In later systems, we redesigned HITL not as a UX pattern, but as an incentive system. Reviewers were explicitly measured on quality of disagreement, not just alignment. Overrides were reviewed for learning, not blame. Patterns of human-model disagreement were surfaced as first-class signals, not anomalies to be suppressed.

This was uncomfortable. It required leadership to accept that human judgment would sometimes contradict models, and that this contradiction was not a failure but a source of insight. It also required protecting individuals who exercised judgment in good faith, even when outcomes were negative.

Without these protections, HITL becomes a fig leaf: technically present, functionally absent.
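To make “disagreement as a first-class signal” concrete, here is a minimal sketch of how human-model disagreement might be summarized per segment. It is an illustration under stated assumptions, not the tooling we actually used: the `ReviewedCase` schema, the segment names, and the `override_precision` metric (the share of overrides where the human turned out to be right) are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewedCase:
    """One case seen by both the model and a human reviewer (hypothetical schema)."""
    segment: str          # e.g. a product line or customer tier
    model_decision: str   # what the model recommended
    human_decision: str   # what the reviewer finally decided
    true_outcome: str     # ground truth, known only after the fact


def disagreement_report(cases):
    """Summarize human-model disagreement per segment.

    override_precision is the share of overrides in which the human was right.
    """
    grouped = defaultdict(list)
    for case in cases:
        grouped[case.segment].append(case)

    report = {}
    for segment, items in grouped.items():
        overrides = [c for c in items if c.human_decision != c.model_decision]
        correct = [c for c in overrides if c.human_decision == c.true_outcome]
        report[segment] = {
            "cases": len(items),
            "override_rate": len(overrides) / len(items),
            # None when there were no overrides, so "no signal" is not read as 0%.
            "override_precision": len(correct) / len(overrides) if overrides else None,
        }
    return report


# Illustrative data only; segments and decisions are made up.
cases = [
    ReviewedCase("cards", "block", "block", "block"),
    ReviewedCase("cards", "block", "allow", "allow"),
    ReviewedCase("cards", "allow", "block", "allow"),
    ReviewedCase("cards", "allow", "allow", "allow"),
    ReviewedCase("wires", "block", "block", "block"),
]
report = disagreement_report(cases)
```

A segment with a near-zero override rate is not automatically healthy. Read alongside reviewer incentives, it may be the rubber-stamping pattern described above, which is why the rate and the precision of overrides are worth tracking together.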

Why Product Teams Struggle When Accountability Becomes Probabilistic

One of the most profound organizational impacts of AI systems shows up inside product teams themselves. Traditional product management is built on a set of assumptions that AI quietly dismantles. Features are expected to behave predictably. Success criteria are defined upfront. Delivery is framed as a linear progression from requirements to implementation to launch.

AI systems refuse to cooperate with this worldview.

I saw this tension surface repeatedly in roadmap discussions. Stakeholders would ask, “When will this be done?” or “Can we commit to this behavior?” These are reasonable questions in deterministic systems. In probabilistic systems, they are often unanswerable without false certainty.

Product managers found themselves caught between competing pressures. On one side, leadership wanted commitments and timelines. On the other, the reality of model behavior demanded iteration, experimentation, and humility. The result was often a compromise that satisfied no one: overly conservative deployments that delivered limited value, followed by slow, painful attempts to expand scope.

The deeper issue was accountability. In traditional products, accountability is binary. A feature either works or it doesn’t. In AI systems, accountability is statistical. A model can be “right” 95% of the time and still cause unacceptable harm in the remaining 5%.

This creates a psychological burden for product leaders that is rarely acknowledged. You are accountable not just for what the system does, but for what it sometimes does. You are asked to defend outcomes that cannot be guaranteed, only bounded.

Over time, I noticed that product teams either adapted or burned out. Teams that adapted shifted their mental model. They stopped treating roadmaps as promises and started treating them as learning agendas. They focused less on shipping features and more on shaping decision environments. They learned to communicate uncertainty explicitly, even when it was uncomfortable.

Teams that didn’t adapt struggled. They defaulted to over-specification, trying to force certainty where none existed. This slowed progress and eroded trust. Eventually, AI became seen as “too risky” or “not worth the effort,” not because it lacked value, but because the organization lacked the language and structures to manage probabilistic accountability.

This is not a tooling problem. It is a cultural and operational one.

Operating AI in Regulated Environments Without Compliance Theater

My understanding of AI transformation changed most dramatically when I began working in regulated environments where mistakes did not merely affect metrics but triggered legal, financial, and reputational consequences. In these settings, the usual Silicon Valley narratives about “moving fast” and “iterating in production” collide head-on with reality. Regulators do not care how elegant your model is. They care about whether decisions can be explained, audited, and defended months or years after the fact.

What surprised me was not the presence of regulation, but how poorly most organizations internalized what regulation actually demanded. Compliance was often treated as an external constraint, something to be satisfied through documentation, approvals, and sign-offs layered on top of an otherwise unchanged system. This led to what I now think of as compliance theater: extensive paperwork and governance rituals that created the appearance of control without meaningfully improving decision quality or accountability.

In practice, regulated AI systems force you to confront uncomfortable questions that unregulated environments can sometimes ignore. Who is responsible for a decision when it emerges from a combination of data, model logic, and human intervention? How do you explain a probabilistic outcome to an auditor who expects deterministic reasoning? How do you demonstrate consistency in a system that is designed to evolve?

Early on, I saw teams attempt to solve these problems through static artifacts. They wrote model documentation at launch and treated it as immutable. They captured snapshots of feature importance without acknowledging that these relationships would change over time. They defined approval checkpoints that froze the system in a particular state, even as the environment continued to shift.

This approach satisfied short-term audit requirements but failed operationally. Over time, the documentation diverged from reality. Models drifted. Manual overrides accumulated without being reconciled back into system understanding. When audits eventually came, the organization was forced into a reactive scramble, retrofitting explanations after the fact.

What worked better, though it required more upfront discipline, was embedding compliance into the operating model itself. Instead of asking whether a model was compliant at a point in time, we began asking whether the process by which the model evolved was compliant. This reframing changed everything.

Explainability stopped being a static report and became a continuous capability. Auditability was no longer about preserving a single version of the model, but about preserving the lineage of decisions, data, and changes over time. Governance shifted from episodic reviews to ongoing oversight embedded in regular operating rhythms.

This aligns closely with guidance from financial regulators, who increasingly emphasize governance, traceability, and accountability over specific technical approaches.

(Source: Bank for International Settlements, “Principles for Financial Market Infrastructures”)

The key insight here is that compliance is not something you add to an AI system; it is something you design the organization around. When compliance is externalized, AI systems become brittle. When compliance is internalized into the operating model, AI systems become defensible and resilient.
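One way to picture “preserving the lineage of decisions, data, and changes over time” is an append-only event log. The sketch below is an assumption-laden illustration rather than a description of any system I worked on: the `LineageEvent` fields, the event kinds, and the choice to chain events with SHA-256 hashes are all hypothetical, and timestamps are left out to keep the example short.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class LineageEvent:
    kind: str            # hypothetical kinds: "decision", "override", "retrain"
    model_version: str
    data_snapshot: str   # identifier of the data the model saw or was trained on
    detail: dict         # must be JSON-serializable
    actor: str           # person or service responsible
    prev_hash: str       # hash of the previous event; "" for the first one


def event_hash(event):
    """Stable SHA-256 digest of an event's full content."""
    payload = json.dumps(asdict(event), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class LineageLog:
    """Append-only log in which each event references its predecessor's hash."""

    def __init__(self):
        self._events = []

    def append(self, kind, model_version, data_snapshot, detail, actor):
        prev = event_hash(self._events[-1]) if self._events else ""
        event = LineageEvent(kind, model_version, data_snapshot, detail, actor, prev)
        self._events.append(event)
        return event

    def verify(self):
        """True if every event still points at the hash of its predecessor.

        Note: the newest event is only covered once a successor is appended.
        """
        prev = ""
        for event in self._events:
            if event.prev_hash != prev:
                return False
            prev = event_hash(event)
        return True

    def history(self, model_version):
        """All events recorded against one model version, in order."""
        return [e for e in self._events if e.model_version == model_version]


# Illustrative usage; identifiers are made up.
log = LineageLog()
log.append("retrain", "v2", "snapshot-001", {"reason": "drift"}, "ml-pipeline")
log.append("decision", "v2", "snapshot-001", {"case": "c-17", "score": 0.91}, "model")
log.append("override", "v2", "snapshot-001", {"case": "c-17", "to": "allow"}, "reviewer-a")
```

The point of the design is that an auditor's question (“which model version, trained on which data, produced this decision, and who overrode it?”) becomes a query over the log rather than a reconstruction exercise.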

Ownership Collapses When Things Go Wrong, Unless You Redesign It

One of the most revealing moments in any AI initiative comes not during a successful deployment, but during the first serious failure. Not a minor accuracy dip or an edge-case bug, but a failure that forces leadership to ask, “How did this happen, and who owns it?”

In traditional systems, ownership questions are usually straightforward. A service went down. A bug was introduced. A requirement was misunderstood. Responsibility can be traced along familiar lines. AI systems, by contrast, fail in ways that expose the seams between teams.

I vividly remember a post-mortem where a model made a series of decisions that, individually, were defensible but collectively produced an unacceptable outcome. The data science team pointed out that the model behaved as trained. Engineering confirmed there were no system errors. Product argued that the behavior aligned with documented objectives. Risk teams argued that the outcome violated implicit expectations, even if explicit thresholds were met.

The discussion stalled because ownership had been implicitly distributed but never explicitly defined. Everyone owned a piece of the system, but no one owned the decision.

This is a structural problem, not a cultural one. When organizations introduce AI, they often preserve existing ownership models, assuming they will stretch to accommodate new complexity. They do not. AI systems sit at the intersection of product intent, data representation, statistical inference, and operational execution. Without explicit decision ownership, failures fall into organizational gaps.

Over time, I learned that effective AI operating models assign ownership not just to systems, but to decisions. Someone must own the question, “Should this decision be automated at all?” Someone must own the acceptable trade-offs between false positives and false negatives. Someone must own the criteria for human escalation and the authority to change it.

This does not mean centralizing control in a single role. It means making ownership explicit and visible. In the most effective organizations I worked with, decision ownership was treated as a first-class concept, discussed openly and revisited as systems evolved.

This clarity had a powerful side effect: it made it easier to shut systems down when necessary. One of the hardest calls in AI transformation is deciding when a model should be paused, rolled back, or retired. Without clear ownership, these decisions become political. With clear ownership, they become operational.

Research on production ML failures reinforces this point: organizations that lack clear accountability structures tend to delay corrective action, amplifying downstream impact.

(Source: Sculley et al., “Hidden Technical Debt in Machine Learning Systems”)

Ownership is not about blame. It is about enabling decisive action under uncertainty.

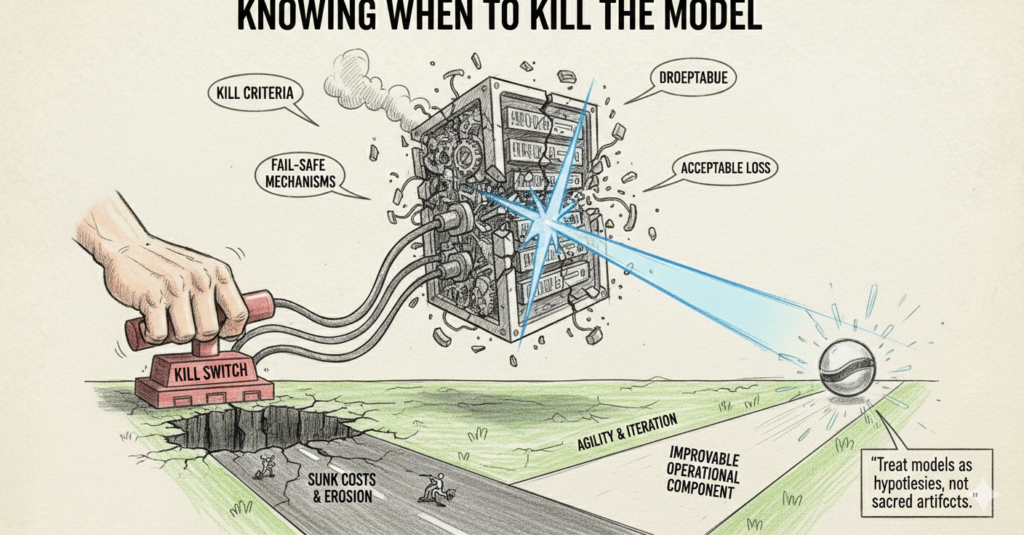

Knowing When to Kill the Model

Perhaps the most counterintuitive lesson I learned through repeated AI initiatives is that successful AI organizations are willing to turn models off. This runs against the prevailing narrative, which frames AI systems as assets that must be justified, defended, and scaled at all costs.

In reality, AI models are hypotheses encoded in software. They represent a particular understanding of the world at a particular moment in time. When that understanding no longer holds, the model is not “underperforming”; it is obsolete.

I’ve seen organizations cling to models long after warning signs were obvious. Drift metrics were trending in the wrong direction. Human overrides were increasing. Edge cases were becoming more frequent. Yet the model remained in production because no one wanted to admit failure, unwind sunk costs, or navigate the organizational fallout of rollback.

This is where operating model maturity matters most. Organizations that treat AI models as sacred artifacts struggle to let them go. Organizations that treat them as operational components (replaceable, improvable, and fallible) move faster and recover better.

In later initiatives, we built explicit “kill criteria” into the system from the outset. These were not vague performance targets, but concrete thresholds tied to business impact, trust signals, and operational cost. When those thresholds were crossed, the model was automatically downgraded, constrained, or disabled pending review.

This was uncomfortable, especially early on. But over time, it built credibility. Teams trusted the system more because they knew there were limits. Leadership trusted the organization because it demonstrated discipline rather than blind optimism.

This approach mirrors practices in safety-critical systems engineering, where fail-safe mechanisms are considered essential, not optional. AI systems that affect real outcomes deserve the same seriousness.
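The kill criteria described above can be sketched as a small rule evaluator. This is a minimal illustration, not the implementation we ran: the metric names, the threshold values, the three operating modes, and the decision to treat a missing metric as a breach are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum


class ModelMode(IntEnum):
    """Operating modes, ordered from least to most restrictive (hypothetical)."""
    AUTONOMOUS = 0   # model decides on its own
    ASSISTED = 1     # model recommends, a human decides
    DISABLED = 2     # model is off pending review


@dataclass(frozen=True)
class KillCriterion:
    name: str
    metric: str          # key into the metrics snapshot
    threshold: float     # breached when the metric exceeds this value
    action: ModelMode    # mode to fall back to on breach


def evaluate(criteria, metrics):
    """Return the most restrictive mode demanded by any breached criterion,
    plus the names of the criteria that were breached."""
    mode = ModelMode.AUTONOMOUS
    breached = []
    for criterion in criteria:
        value = metrics.get(criterion.metric)
        # Assumption: a missing metric counts as a breach (no visibility, no autonomy).
        if value is None or value > criterion.threshold:
            breached.append(criterion.name)
            mode = max(mode, criterion.action)
    return mode, breached


# Illustrative thresholds only; real values would come from the decision owner.
criteria = [
    KillCriterion("human override rate", "override_rate", 0.20, ModelMode.ASSISTED),
    KillCriterion("weekly loss", "weekly_loss_usd", 50_000, ModelMode.DISABLED),
    KillCriterion("input drift", "drift_score", 0.25, ModelMode.ASSISTED),
]
metrics = {"override_rate": 0.27, "weekly_loss_usd": 12_000, "drift_score": 0.10}
mode, breached = evaluate(criteria, metrics)
```

With these sample numbers only the override-rate criterion is breached, so the model is downgraded to assisted mode rather than switched off. Because the thresholds are written down in advance, the downgrade is an operational event rather than a negotiation.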

What an AI-Capable Operating Model Actually Looks Like

After seeing AI initiatives succeed and fail across different contexts, I no longer believe in generic “AI readiness” assessments. What matters is whether the organization has built an operating model that can sustain probabilistic, evolving systems without collapsing under their own complexity.

In practice, this means the organization has internalized several hard truths.

First, decisions are treated as explicit design objects. Teams know which decisions exist, which ones are automated, which ones are assisted, and which ones remain human. These boundaries are revisited regularly, not set once and forgotten.

Second, change is expected. Retraining, recalibration, and adjustment are normal operational activities, not exceptional events. Governance processes are designed to support frequent, low-risk changes rather than infrequent, high-risk ones.

Third, disagreement is surfaced, not suppressed. Human-model conflict is measured, analyzed, and learned from. It is not treated as noise or failure, but as a signal about system boundaries.

Fourth, accountability is unambiguous. Every decision has an owner who is empowered to act. Escalation paths are clear. Kill switches exist and are socially acceptable to use.

Finally, leadership understands that AI systems do not eliminate uncertainty; they redistribute it. Instead of pretending uncertainty can be engineered away, mature organizations build structures that absorb it.

When these conditions are present, AI stops being fragile. It becomes a durable capability, not because the models are perfect, but because the organization is built to absorb their imperfections.

The Question Leaders Should Actually Be Asking

Over the course of multiple AI initiatives, I’ve heard the same question repeated in different forms: “Is the model good enough to deploy?” It sounds reasonable. It feels responsible. And it misses the point.

The more important question is this: Is the organization capable of operating a system that learns, changes, and occasionally gets things wrong without panicking or freezing?

If the answer is no, then no model will ever be good enough.

AI transformation is not a technology problem waiting for a better algorithm. It is an organizational problem waiting for leaders willing to rethink how decisions are made, governed, and owned.

Until that happens, AI will remain an expensive experiment rather than a lasting advantage.

Harshil Thakkar is a seasoned product leader with experience leading products end-to-end across fintech, payments, B2B SaaS, eCommerce, AdTech, banking, and real estate. His work spans product discovery, platform and feature development, go-to-market launches, and post-launch growth, often in regulated environments where trade-offs between speed, risk, and scale matter. He writes about real product decisions, growth inflection points, and lessons learned from building durable products.